Update (2/20/18): An Intel spokesperson reached out to us with the following statement to clarify things:

Last week at ISSCC, Intel Labs presented a research paper exploring new circuit techniques optimized for power management. The team used an existing Intel integrated GPU architecture (Gen 9 GPU) as a proof of concept for these circuit techniques. This is a test vehicle only, not a future product. While we intend to compete in graphics products in the future, this research paper is unrelated. Our goal with this research is to explore possible, future circuit techniques that may improve the power and performance of Intel products.

The original article is below…

It looks like Intel is making progress in developing a discrete GPU architecture. This, of course, comes in the wake of its hiring of Raja Koduri, the former head of AMD's Radeon Technologies Group (RTG). At the IEEE International Solid-State Circuits Conference (ISSCC) in San Francisco, Intel unveiled slides pointing to the direction its GPU development is taking. Intel is essentially scaling up its existing iGPU architecture and enabling it to better sustain high clock speeds.

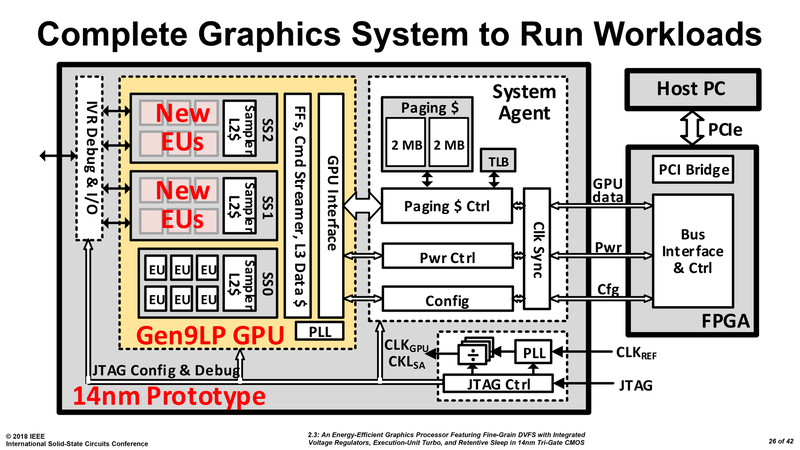

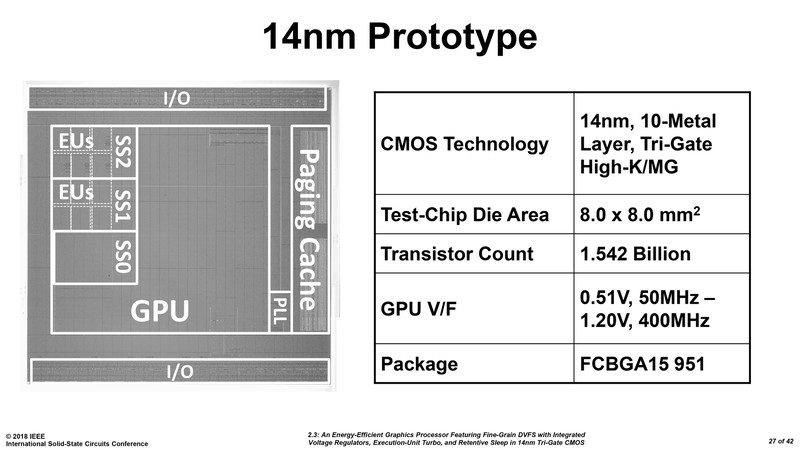

At ISSCC, Intel showed a test chip: a 14nm dGPU prototype. It is actually a two-chip solution. The first chip contains the GPU itself and a system agent, and the second is an FPGA that interfaces with the system bus.

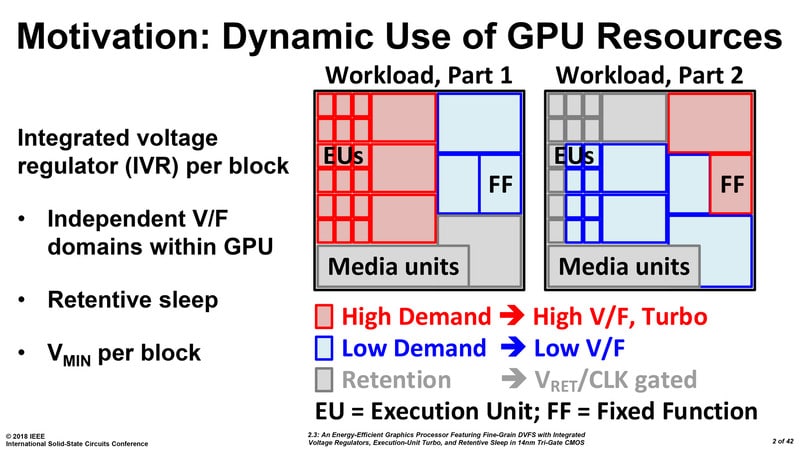

The GPU component is based on Intel's Gen 9 architecture and features three execution unit (EU) clusters. Don't try to draw performance numbers from this configuration, though, because Intel is only presenting it as a proof of concept. The three clusters are wired to a power/clock management mechanism that efficiently manages the power and clock speed of each individual EU.

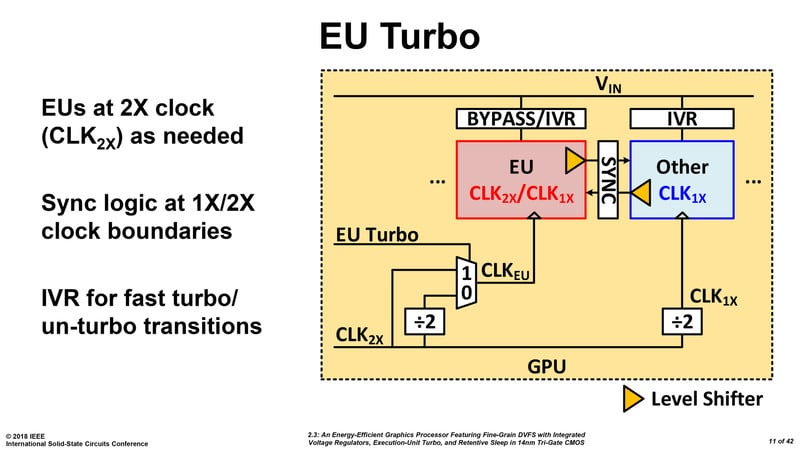

There is also a double-clock feature that doubles the boost-state clock speed, which is beyond what current Gen 9 EUs can handle in Intel iGPUs. Once a suitable level of energy efficiency is achieved, Intel will use newer generations of EUs and scale up EU counts on newer fab processes to develop bigger discrete GPUs.

Check out the rest of the slides below…